Last updated on December 3rd, 2025 at 12:56 pm

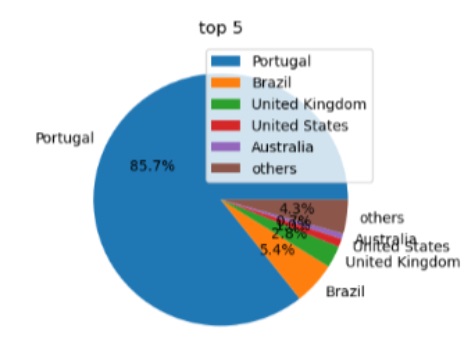

Local elections (“autárquicas”) were held in Portugal on 12 October 2025, and Arquivo.pt compiled a special collection of electoral content published on the web, resulting in 3.5 terabytes of information for research and academic work.

440 search terms were used to obtain 43,000 page addresses, along with the websites of parishes, municipalities, and political parties.

Here we explain the various steps involved in collecting data on the elections:

- preparation of a list of search terms

- search (using Google and the Google Rank Checker browser extension)

- recording (using Heritrix and Browsertrix-crawler)

- integration into Arquivo.pt

- provision of data sets for research

How to identify election-related content on the web

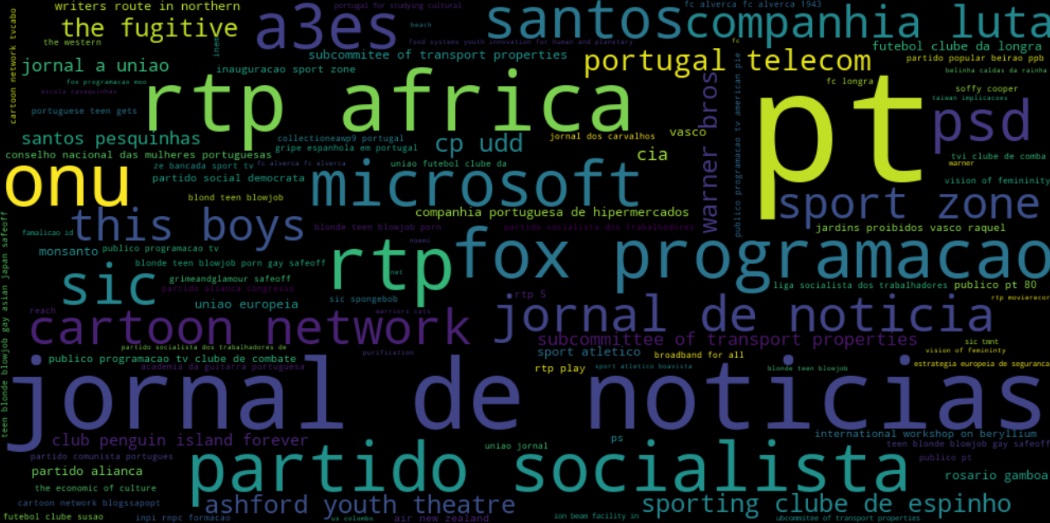

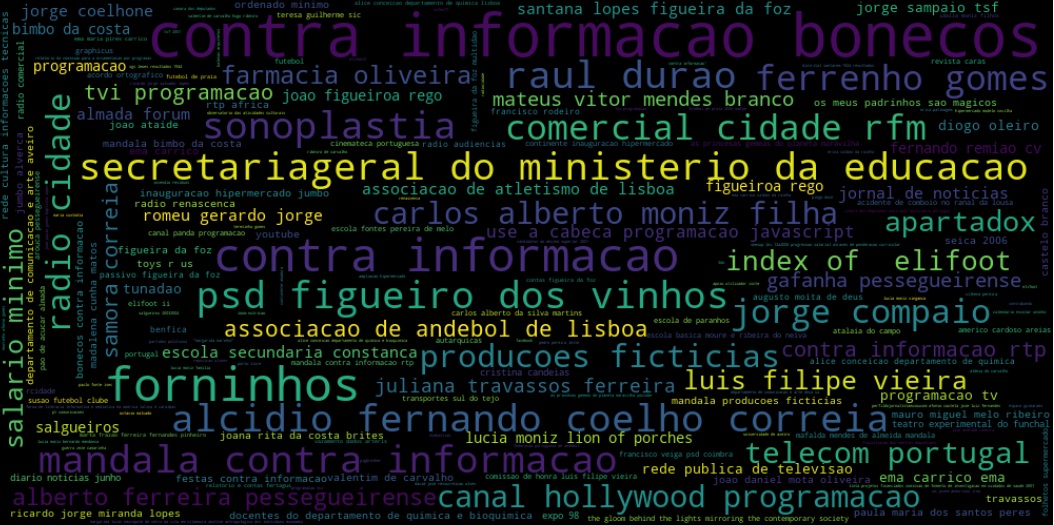

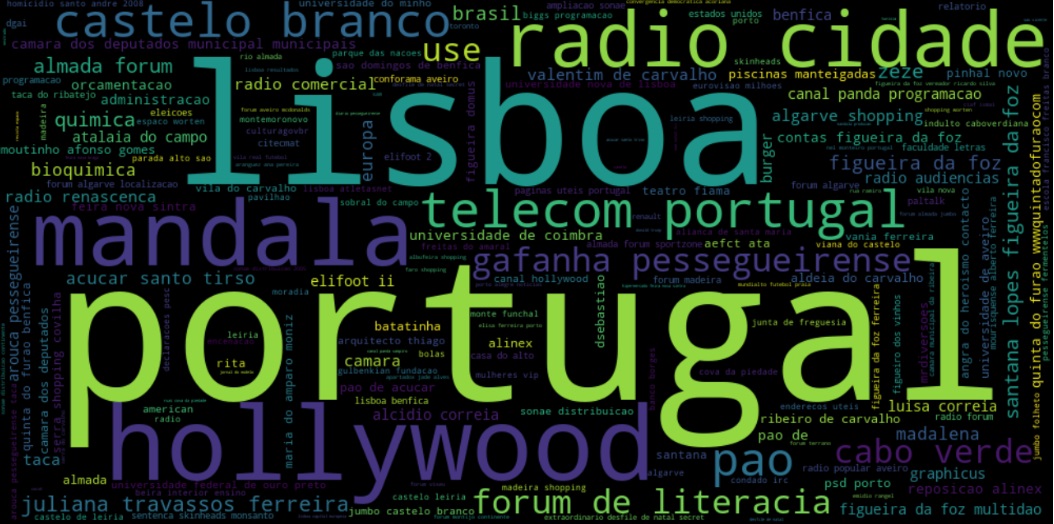

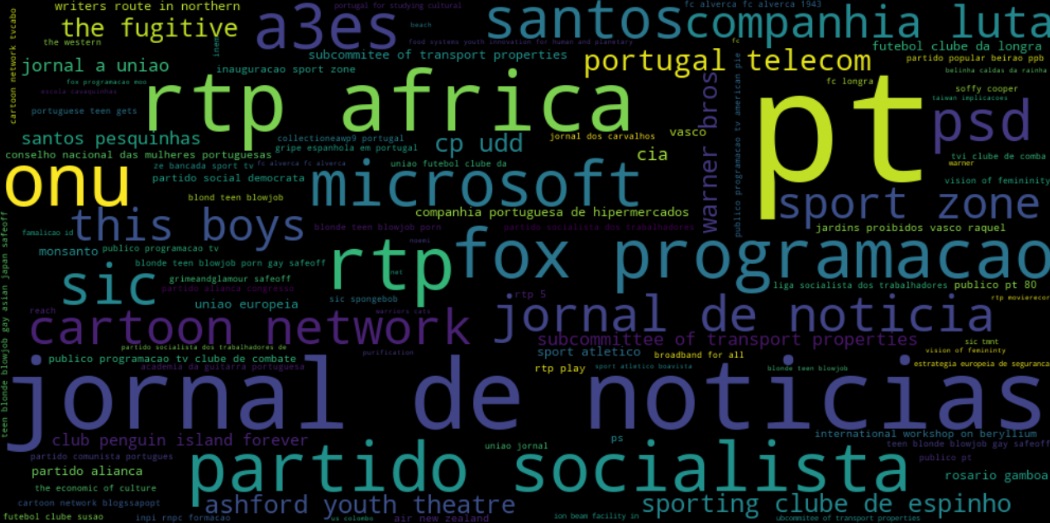

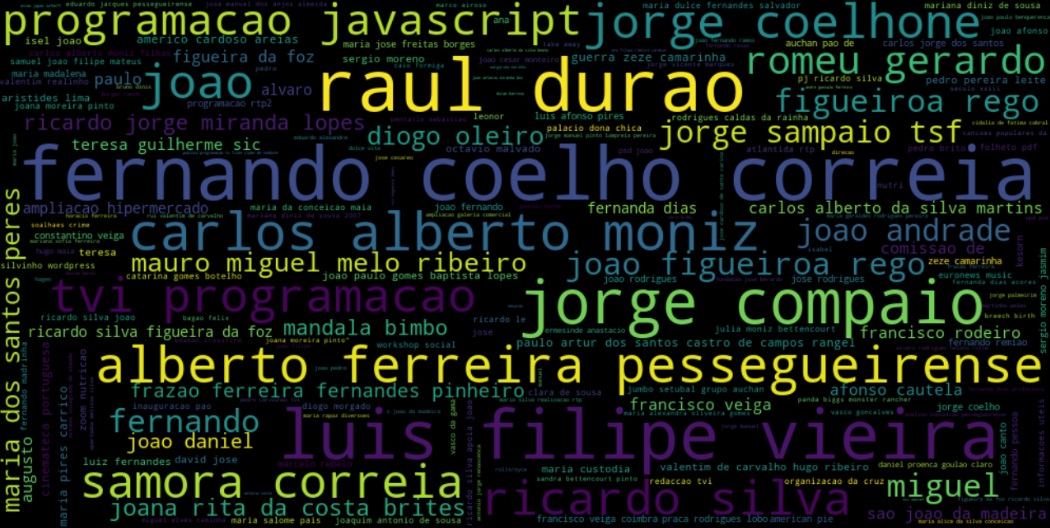

To identify content related to the elections, we used a list of search terms, for example, “eleições autárquicas 2025″, “habitação autárquicas 2025″, “promessas “autárquicas 2025”. After the elections, other terms were added, such as “vitória autárquicas 2025”, “resultados autárquicas 2025”.

The search terms are words that aim to include various topics related to the elections, such as politics, society, economics, among others, media, candidate names, and regions of the country.

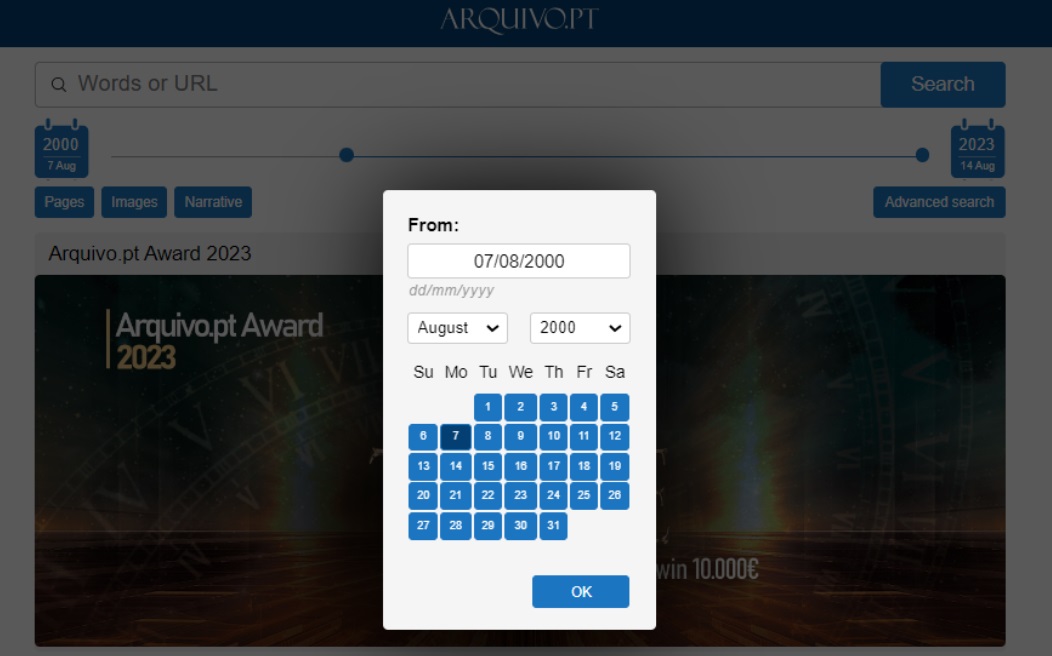

In the collection on local elections, the Google search engine was used to perform each search. Some advanced search parameters were used: number of results (&num=100), news results (&tbm=nws), image results (&udm=2). After the elections, the results were restricted using the “last week” filter.

In each search, the addresses of the search engine results pages (SERP) were extracted using the Google Rank Checker,Keyword SERP Ranking Tool. This tool works as a browser extension that exports the list of results in JSON format.

In total, 1,400 searches or queries were performed on Google (800 before the elections and 600 after the elections). Finally, the results of all searches (.json files) were compiled into a document and converted into a table. Each result contains various data, such as relevance, the domain from which it was extracted, the link or URL, the title of the publication, the date of the search, and the query.

It should be noted that the list obtained represents only a small portion of everything published on the Web about the elections. In addition, the same list contains results unrelated to the purpose of the collection (false positives) and some repetitions. To save time, no lines were deleted.

This exercise resulted in 45,000 pages (seeds) with news, articles, and publications related to the elections to be used in the collection process by Arquivo.pt. This dataset, 2025 Local Elections, is available on the open data platform Dados.Gov.

A list of parish councils, municipal councils and political parties with their respective websites has also been added.

How the contents were recorded and limitations to be taken into account

The addresses obtained before and after the elections were recorded in two web crawlers, Heritrix and Browsertix-crawler . These tools record pages from a given starting address (seed), then follow the links there, up to a certain limit, in this case a maximum of five times (five hops).

Heritrix was used for an initial generic collection of pages, as it is capable of quickly processing lists containing thousands of addresses: 25,858 URLs before the elections and 17,258 URLs after the elections. It generated 541 gigabytes of information.

The Browsertix-crawler was used to improve the collection of dynamic content. This crawler’s recording is browser-based. Recording takes longer, but captures content that would otherwise escape collection.

The collection was carried out using the Browsertix-crawler, in stages, first by recording the parish websites in August and September, and then, between October 9 and November 5, by recording news about the elections and 8,850 social media posts. It generated 2.9 terabytes of information.

As for the limits of the collection, we were able to identify a few: access blocked by some websites that defend themselves against automatic access, despite the Arquivo.pt agent being identified; social media content behind a login that cannot be reproduced on Arquivo.pt; videos that cannot be reproduced due to their format.

How and when to access data for research and work creation

EAWP48 is the identifying name of the collection that will bring together content on the Local Elections of 12 October 2025. It is described in the list of collections at Arquivo.pt.

Nos próximos meses, o conteúdo será indexado e os índices CDXJ ficarão disponíveis para os investigadores na lista de datasets do Arquivo.pt.

In the coming months, the content will be indexed and the CDXJ indexes will be available to researchers in the Arquivo.pt dataset list.

After one year, the collected content will be accessible through the Arquivo.pt search engine. Anyone will then be able to search election pages by text or image.

For further information, please contact us.

Data collected on the 2025 Local Elections

- Search terms list (queries) (PDF pre-elections, PDF pos-eleictions)

- Results list pre-elections (SERP, queries and seeds)

- Results list post-elections (SERP, queries and seeds)

- List of parish councils (websites)

- List of municipal councils (websites)

- List of political parties (websites)

Find out more about electoral recalls from previous years

- Lists of seeds published at the open data portal Dados.gov

- Portuguese Legislative Elections 2025 had a special collection by Arquivo.pt

- 2024 European and Portuguese elections in special Arquivo.pt collections

- Collections related to elections: EAWP7, EAWP9, EAWP16 EAWP17, EAWP23, EAWP26, EAWP37, EAWP39, EAWP40, EAWP45, EAWP46

- We archived the Web pages of the Portuguese Parliamentary Elections of 2015!

- We archived the Web pages of the Portuguese Presidential Elections of 2016!

- Cross-lingual collection about the 2019 European Elections is available

- Portuguese municipal elections 2021 preserved by Arquivo.pt

- Cross-lingual research datasets on 2019 European Parliamentary Elections (Use case)

- 2019 European Parliamentary Elections – CoNLL-U texts (Use case)

- 2019 European Parliamentary Elections – Raw texts (Use case)

- Bing Search API script (service discontinued in August 2025)